The Hidden Cost of the AI GTM Stack

Why AI tools are forcing SaaS companies to rethink pricing, and what this means for GTM teams building automation-heavy workflows.

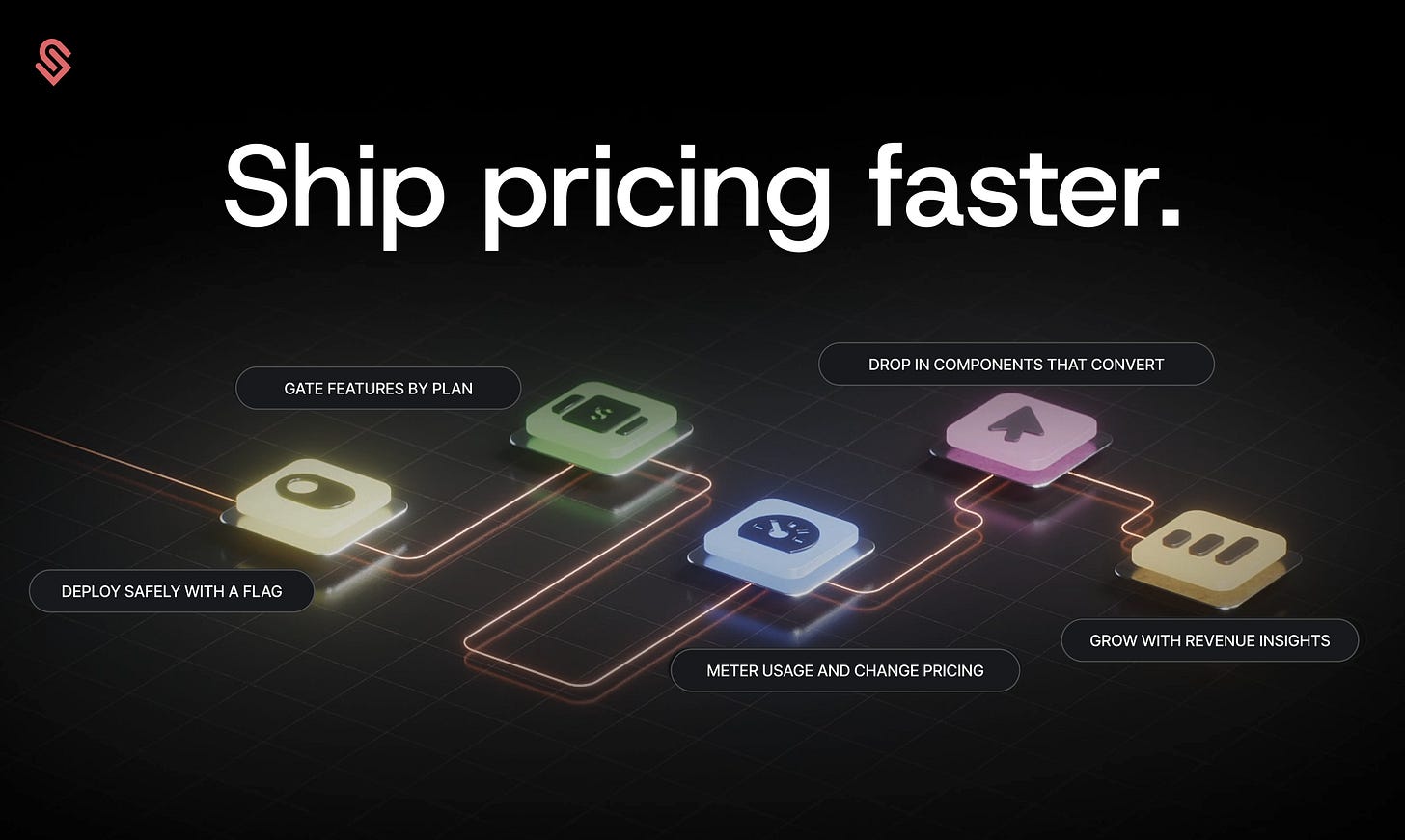

This post is supported by Schematic — monetization infrastructure for product & engineering teams.

Schematic decouples billing from code, giving product teams direct control over pricing, packaging, limits, add-ons, credits, trials, migrations, and upgrades — without burdening engineering.

The result: faster pricing experiments, better conversion, more upgrades, and less revenue leakage. Works natively with Stripe Billing. Used by high-growth teams like Plotly, Pagos, TightKnit, and Zep.

Dear GTM Strategist,

Everyone talks about how AI will make GTM cheaper because you can just vibe code your own tool and cancel expensive subscriptions. But an interesting trend is happening instead.

AI tools are becoming more expensive to run, not less. And the reason has very little to do with pricing decisions. It has to do with how AI software actually works.

Clay’s recent pricing change is a good example.

When they announced the update, reactions were all over the place. Some users celebrated the fact that data costs dropped significantly. Others quickly calculated that the same workflows would now cost much more.

Both reactions were technically true.

Clay did not simply change prices. They changed how costs are exposed inside the product.

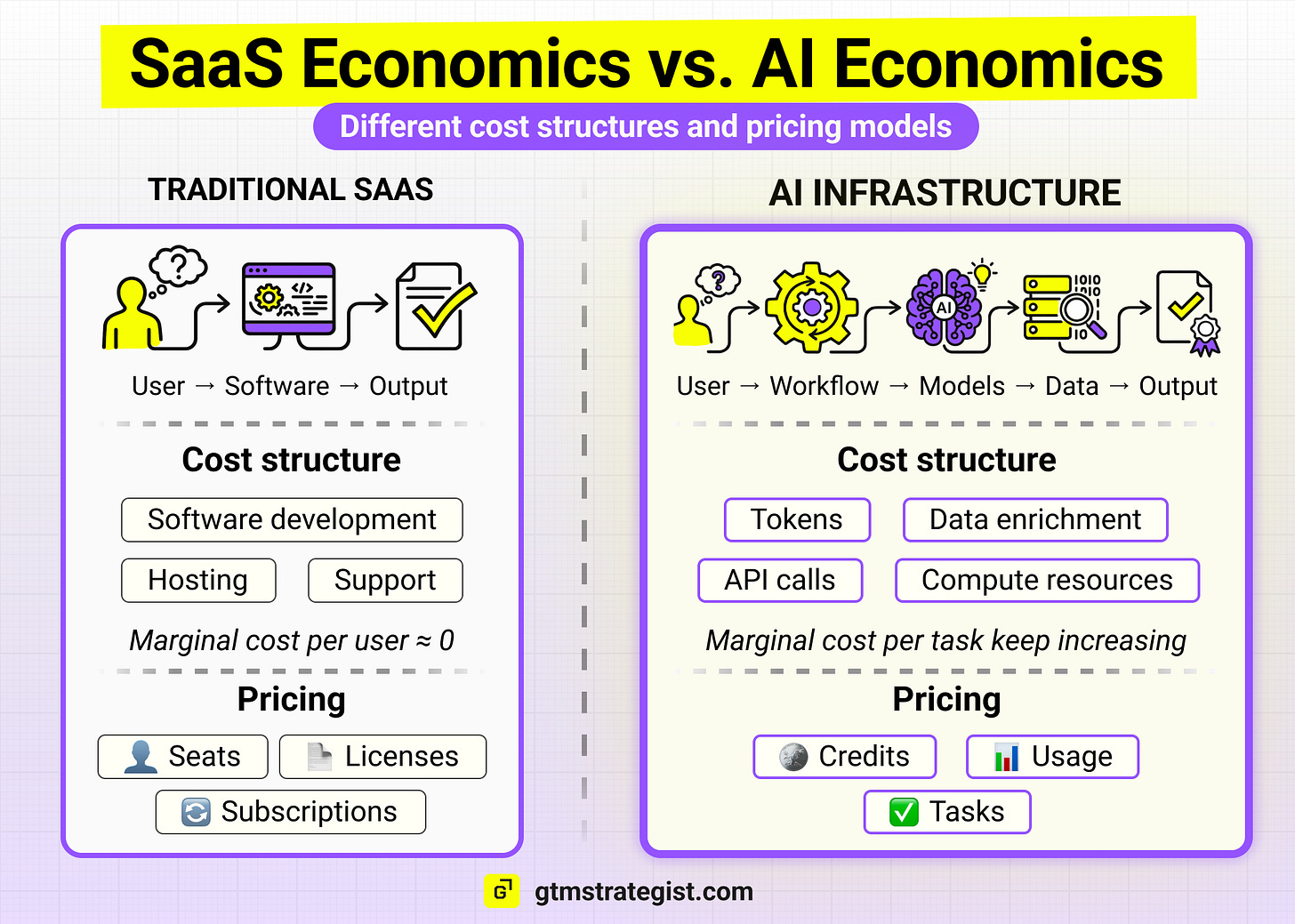

For the past two decades, SaaS followed a predictable model. Companies sold access to software, usually priced per seat, while the cost of serving each additional user was close to zero.

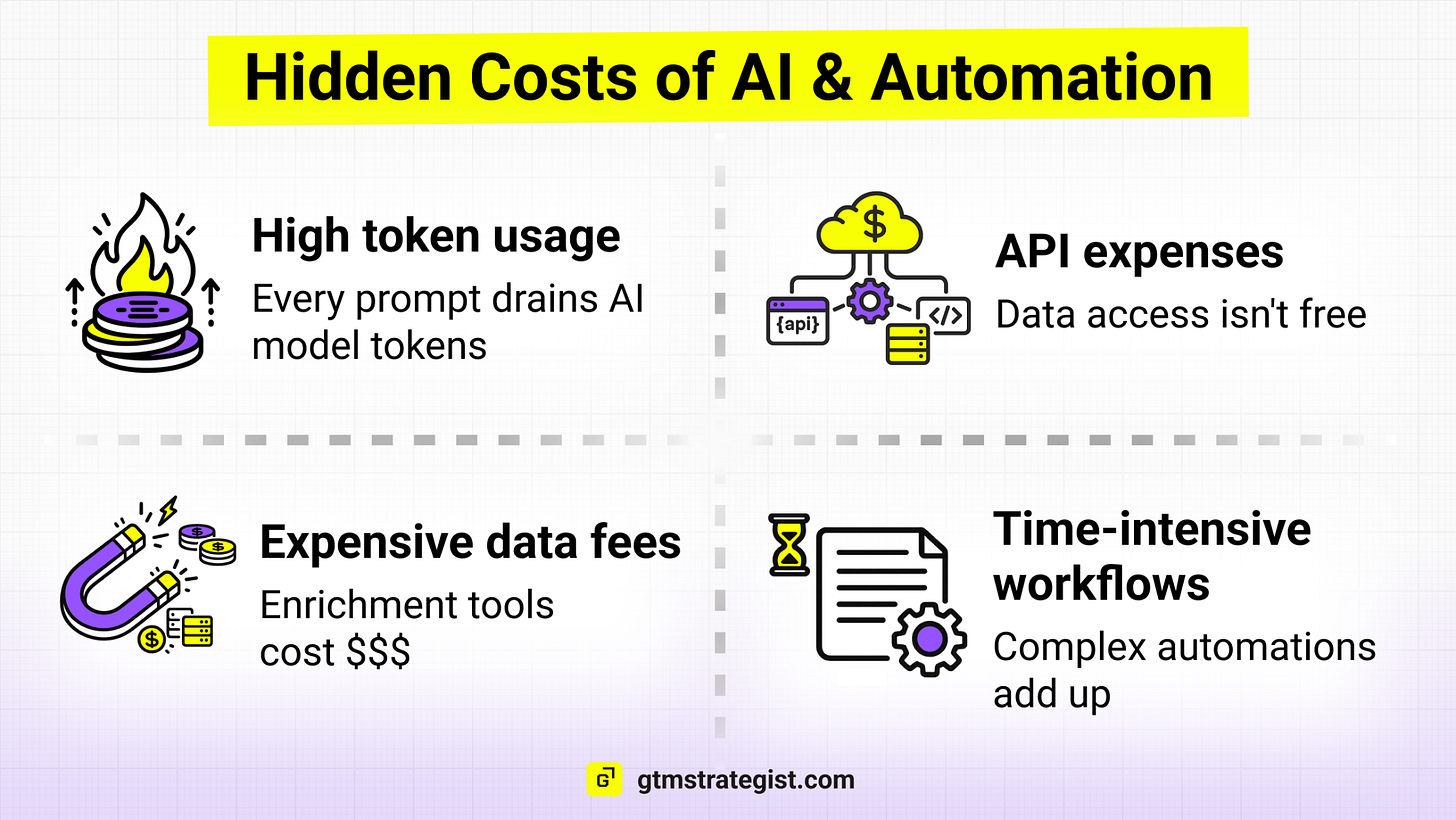

AI tools break that model. Every AI-powered workflow consumes something real behind the scenes. Tokens. API calls. Model inference. Compute.

As usage grows, so do the underlying costs. Which means the economics of AI products look very different from traditional SaaS.

Clay’s pricing change simply makes that reality visible. And it highlights a much bigger shift happening across the AI software landscape.

In this post, we will look at:

Why AI tools are forcing new pricing models

Why credits and usage-based pricing are spreading across SaaS

How teams are tackling variable costs of AI tools

What this means for the future economics of AI-powered GTM stacks

Let’s get into it.

Clay’s Pricing Change Is a Signal

Clay introduced a new pricing model last week. At first glance, the announcement sounded simple. Data costs inside the platform dropped significantly. In many cases, the reduction was between 50 and 90 percent.

That part was easy to understand. Data enrichment has always been one of the most expensive parts of running Clay workflows, so cheaper data was welcomed by many users.

But the rest of the update revealed something more interesting.

Clay separated the costs of two things that used to be bundled together:

Data credits

Workflow actions (platform usage)

Data credits now pay for enrichment and external sources. Actions pay for running workflows and executing tasks inside the platform.
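The split can be sketched as two independent meters. This is a minimal illustration that assumes made-up per-unit prices (the constants below are hypothetical, not Clay's actual rates) and simplifies the old bundle to a single price per enrichment. It shows how one price change can lower one team's bill and raise another's.

```python
from dataclasses import dataclass

# Hypothetical per-unit prices in dollars. These are NOT Clay's real rates.
OLD_BUNDLED_PRICE_PER_ENRICHMENT = 0.10  # old model: one price covers data + platform
NEW_DATA_PRICE_PER_ENRICHMENT = 0.03     # new model: cheaper data meter
NEW_PRICE_PER_ACTION = 0.01              # new model: separate meter for workflow actions


@dataclass
class MonthlyUsage:
    enrichments: int  # records enriched from external data sources
    actions: int      # workflow steps executed on the platform


def old_bill(usage: MonthlyUsage) -> float:
    # Simplified bundled model: only enrichments are metered; actions ride along.
    return usage.enrichments * OLD_BUNDLED_PRICE_PER_ENRICHMENT


def new_bill(usage: MonthlyUsage) -> float:
    # Split model: data and platform work are metered independently.
    return (usage.enrichments * NEW_DATA_PRICE_PER_ENRICHMENT
            + usage.actions * NEW_PRICE_PER_ACTION)


enrichment_heavy = MonthlyUsage(enrichments=10_000, actions=10_000)
automation_heavy = MonthlyUsage(enrichments=2_000, actions=50_000)

for name, usage in [("enrichment-heavy", enrichment_heavy),
                    ("automation-heavy", automation_heavy)]:
    print(f"{name}: old ${old_bill(usage):,.2f} -> new ${new_bill(usage):,.2f}")
```

With these placeholder rates, the enrichment-heavy team's bill falls from $1,000 to $400, while the automation-heavy team's bill rises from $200 to $560.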

In other words, Clay is no longer charging only for access to the product. It is charging for the work the product performs. As Kyle Poyar pointed out in his analysis, this pricing now splits out the value/platform piece (high margin) vs. AI tokens (low margin).

The rollout itself was a masterclass in comms: coordinated internal/external messaging, CEO posts, video walkthroughs, and all existing customers grandfathered on the legacy model.

It created two very different reactions in the community.

Some users calculated that their workflows would now be cheaper because enrichment costs dropped so much. Others realized that heavy automation would now generate higher bills.

Both interpretations were correct. Which one applies depends on how each team uses Clay.

The pricing model did not simply go up or down. It exposed where the real costs actually sit. And those costs are not primarily inside the Clay product itself. They come from everything happening underneath the interface.

Every automated workflow triggers multiple external services. Data providers charge per record. AI models charge per token. Infrastructure charges for compute and storage.

The more work the workflow performs, the more resources it consumes. Traditional SaaS products could hide those costs because they were small and predictable.

AI products cannot.

Clay’s pricing update simply makes those economics visible.

AI Products Cannot Be Priced Like Traditional SaaS

To understand why Clay made this change, it helps to zoom out. For most of the past two decades, SaaS companies followed a predictable model.

They sold access to a product, usually priced per seat or per account. Once the software was built, the cost of serving each additional user was relatively small.

That is why SaaS margins became so attractive. Revenue scaled faster than costs, and ARR (Annual Recurring Revenue) was the name of the game.

AI products behave differently. Every time a user runs an AI workflow, the workflow consumes AI tokens. Those costs do not disappear as usage grows. In many cases they grow directly with usage.
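A toy margin calculation makes the difference concrete. All numbers are hypothetical assumptions: a flat seat price, a small fixed cost to serve a traditional seat, and an inference cost per AI workflow run.

```python
# Hypothetical numbers for illustration only.
SEAT_PRICE = 100.0           # flat monthly price per seat, in dollars
CLASSIC_COST_PER_SEAT = 5.0  # near-constant cost to serve a traditional SaaS seat
AI_COST_PER_RUN = 0.05       # inference cost per AI workflow run


def gross_margin(revenue: float, cost: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost) / revenue


def ai_seat_margin(runs_per_month: int) -> float:
    # Revenue stays flat per seat, but cost grows with every run the user triggers.
    cost = CLASSIC_COST_PER_SEAT + runs_per_month * AI_COST_PER_RUN
    return gross_margin(SEAT_PRICE, cost)


print(f"traditional seat: {gross_margin(SEAT_PRICE, CLASSIC_COST_PER_SEAT):.0%}")
for runs in (100, 1_000, 2_000):
    print(f"AI seat, {runs} runs/month: {ai_seat_margin(runs):.0%}")
```

Under these assumptions, the traditional seat keeps a 95% margin regardless of activity, while the AI seat drops to 45% at 1,000 runs a month and goes negative at 2,000.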

This is one reason pricing teams have become unusually active recently. According to the PricingSaaS Trends Report, pricing changes across SaaS companies increased roughly 15 percent year over year, while packaging changes increased more than 20 percent as companies experimented with new pricing structures.

One trend stands out in particular: credit-based pricing models grew more than 120 percent.

Credits allow companies to connect price more closely to consumption. Instead of charging only for access to the product, they charge for the work the product performs.

This shift is already visible across many AI products. Figma introduced AI credits for certain features. Data platforms charge per request or per record processed. Some AI assistants are priced per resolution or per automated task.

Kyle Poyar recently described this shift as moving from selling software access to selling completed work. He also analyzed how

The pricing unit is no longer a seat. It is the task the system performs. And this is reflected in AI-based pricing models.

The Hidden Cost of the AI GTM Stack

Once you start looking at AI tools through the lens of usage, something else becomes visible. Most GTM teams are no longer buying a single product.

They are assembling a stack of services that work together.

A typical outbound workflow today might look simple on the surface.

A contact gets enriched.

The company is analyzed.

A personalized message is generated.

But behind the scenes, that workflow may trigger several systems.

An LLM analyzes the company or contact. Another model generates the message. Automation tools move the data between systems, and users can "bring their own keys": connect their preferred tools with API keys to source the data directly.

Each of those steps has a cost attached to it.

Data providers charge per record.

AI models charge per token.

Infrastructure charges for compute.

None of those costs is visible when the workflow is first built. They only appear once the system starts running at scale. This is why many teams are beginning to notice their AI budgets growing faster than expected. As the 2026 SaaS Management Index shows, 61% of IT leaders have already cut projects due to unplanned SaaS cost increases.

A workflow that feels inexpensive during testing can become costly when it runs thousands of times per week. At that point the GTM stack starts to behave less like traditional software and more like infrastructure.
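The cost of one outbound run can be sketched as the sum of the three meters above, then multiplied out to production volume. The unit prices and the per-run profile are hypothetical assumptions; real provider prices vary widely.

```python
from dataclasses import dataclass

# Hypothetical unit prices; substitute your providers' actual rates.
PRICE_PER_ENRICHED_RECORD = 0.02   # data provider, per record
PRICE_PER_1K_TOKENS = 0.01         # LLM, blended input/output, per 1,000 tokens
PRICE_PER_COMPUTE_SECOND = 0.0001  # infrastructure, per second of runtime


@dataclass
class RunProfile:
    records: int            # records enriched per run
    tokens: int             # tokens used for analysis + message generation
    compute_seconds: float  # runtime of the automation


def cost_per_run(p: RunProfile) -> float:
    return (p.records * PRICE_PER_ENRICHED_RECORD
            + p.tokens / 1_000 * PRICE_PER_1K_TOKENS
            + p.compute_seconds * PRICE_PER_COMPUTE_SECOND)


# One outbound run: enrich one contact, analyze the company, draft a message.
outbound = RunProfile(records=1, tokens=4_000, compute_seconds=20)

print(f"per run:         ${cost_per_run(outbound):.4f}")
print(f"10 test runs:    ${cost_per_run(outbound) * 10:.2f}")
print(f"5,000 runs/week: ${cost_per_run(outbound) * 5_000:,.2f}")
print(f"per year:        ${cost_per_run(outbound) * 5_000 * 52:,.2f}")
```

At these assumed rates a run costs about six cents, so ten test runs cost $0.62, while 5,000 runs a week add up to $310 weekly, or roughly $16,000 a year.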

And infrastructure is almost never priced per seat.

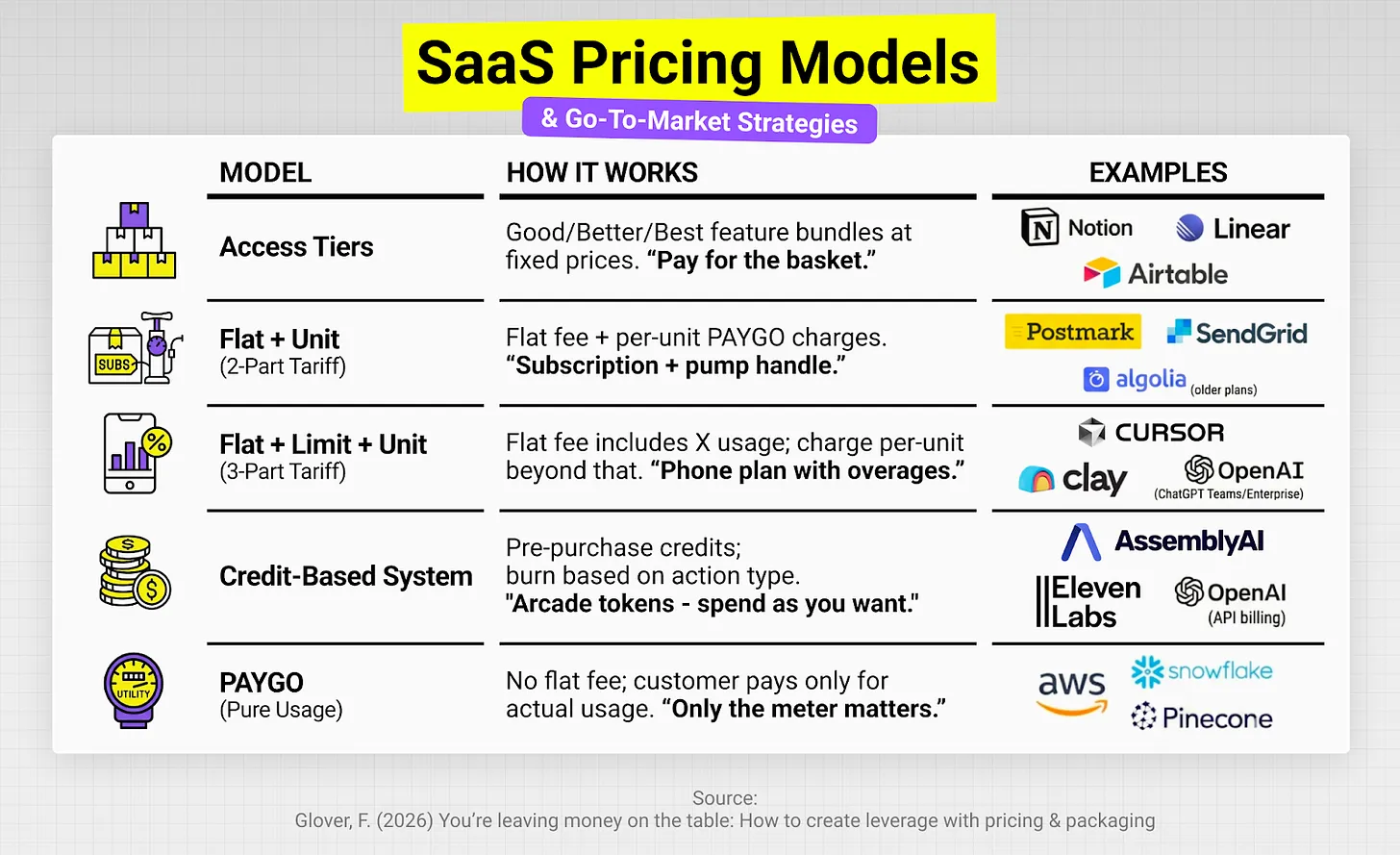

Pricing Models Are Starting to Reflect This Reality

Once you understand the economics behind AI products, the recent wave of pricing changes makes sense. Companies are searching for ways to connect pricing to the actual work their systems perform.

Several models are emerging.

Some companies introduce monthly credit allowances that reset each billing cycle. Others charge once usage exceeds predefined limits.

Hybrid models are also becoming common. A base subscription provides access to the product, while usage-based components capture the cost of AI features.
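A hybrid plan with a resetting allowance can be sketched as a base fee plus metered overage. The plan parameters below are hypothetical; real plans differ in whether unused credits roll over, how overage is priced, and whether caps apply.

```python
# Hypothetical plan parameters for illustration.
BASE_FEE = 500.0           # monthly subscription for platform access
INCLUDED_CREDITS = 10_000  # allowance that resets each billing cycle
OVERAGE_PER_CREDIT = 0.08  # charged only for credits beyond the allowance


def monthly_invoice(credits_used: int) -> float:
    """Hybrid bill: fixed base fee plus metered overage above the allowance."""
    overage_credits = max(0, credits_used - INCLUDED_CREDITS)
    return BASE_FEE + overage_credits * OVERAGE_PER_CREDIT


def bill_cycles(usage_by_month: list[int]) -> list[float]:
    # No rollover: each month is billed against a fresh allowance.
    return [monthly_invoice(used) for used in usage_by_month]


print(bill_cycles([6_000, 10_000, 14_000]))
```

With these numbers, months using 6,000 and 10,000 credits are billed only the $500 base fee, while a 14,000-credit month adds $320 of overage.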

A few companies are going even further and pricing based on outcomes.

Support platforms charge for resolved conversations. Automation platforms charge for executed workflows. AI assistants charge for generated outputs. All of these approaches attempt to answer the same question.
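The difference between billing for activity and billing for outcomes can be sketched with a support example. The prices are hypothetical, and the `resolved` flag stands in for whatever definition of "resolved" a vendor and customer agree on.

```python
from dataclasses import dataclass

# Hypothetical prices for illustration only.
PRICE_PER_CONVERSATION = 0.50  # usage-based: every conversation is billed
PRICE_PER_RESOLUTION = 1.00    # outcome-based: only resolved conversations are billed


@dataclass
class Conversation:
    conversation_id: str
    resolved: bool  # deciding what counts as "resolved" is the hard part in practice


def usage_based_charge(conversations: list[Conversation]) -> float:
    return len(conversations) * PRICE_PER_CONVERSATION


def outcome_based_charge(conversations: list[Conversation]) -> float:
    # The vendor is paid only when the system delivers the outcome.
    return sum(PRICE_PER_RESOLUTION for c in conversations if c.resolved)


month = [Conversation("c1", True), Conversation("c2", False), Conversation("c3", True),
         Conversation("c4", False), Conversation("c5", False)]
print(f"usage-based: ${usage_based_charge(month):.2f}, "
      f"outcome-based: ${outcome_based_charge(month):.2f}")
```

Here the customer pays for 2 resolutions rather than 5 conversations. The three unresolved conversations still consume compute, which is the risk outcome-based pricing shifts onto the vendor.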

What is the most honest unit of value for AI software?

The industry has not settled on a single answer yet. Completed outcomes would be the ideal unit, but they are not always feasible to measure. One thing is becoming clear, however: seat-based pricing alone will not capture the economics of AI systems where usage directly drives cost.

How Top GTM Teams Are Tackling AI Costs

I asked leaders at world-class companies in my network what variable and sometimes unpredictable AI costs mean for them and how they are tackling them.

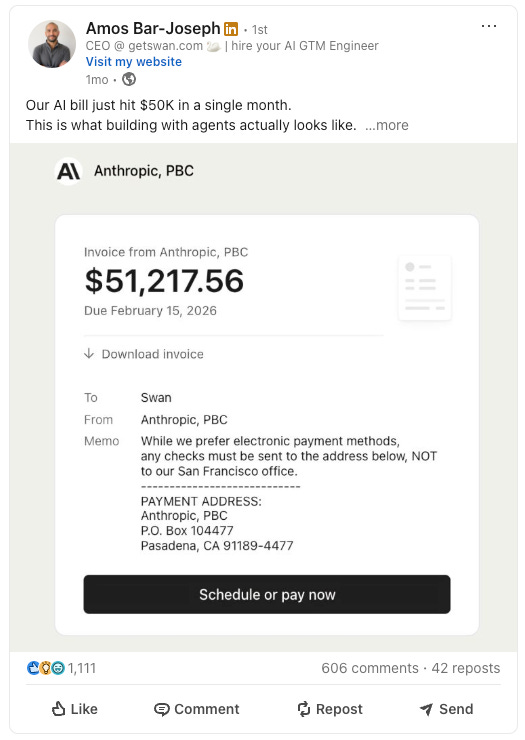

Amos Bar-Joseph, CEO and co-founder of Swan AI, famously shared his $50K monthly Anthropic bill.

A month later, however, he shared how he had optimized those costs: he cut the bill to $27K while doubling AI usage (Swan grew from 200 to 400 customers that month).
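Normalizing the bill by customer count shows how large the efficiency gain was. The dollar and customer figures come from the post; bill per customer is a derived metric, and reading it as unit cost assumes AI usage scales roughly with customers.

```python
# Figures as reported in the post; "bill per customer" is a derived metric.
before_bill, before_customers = 50_000, 200
after_bill, after_customers = 27_000, 400

before_per_customer = before_bill / before_customers  # 250.0 dollars
after_per_customer = after_bill / after_customers     # 67.5 dollars
reduction = 1 - after_per_customer / before_per_customer

print(f"${before_per_customer:.2f} -> ${after_per_customer:.2f} per customer "
      f"({reduction:.0%} lower)")
```

Bill per customer fell from $250 to $67.50, about 73% lower.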

What changed? Swan’s AI agent had been operating through a translation layer - converting every action into human-language instructions that then had to be decoded into code. By removing that layer and rebuilding the agent to operate directly in code - calling APIs and databases natively - they cut costs by nearly half while doubling usage.

The lesson from Amos: the biggest cost savings don’t come from prompt optimization or model switching, but from rethinking the AI architecture itself.

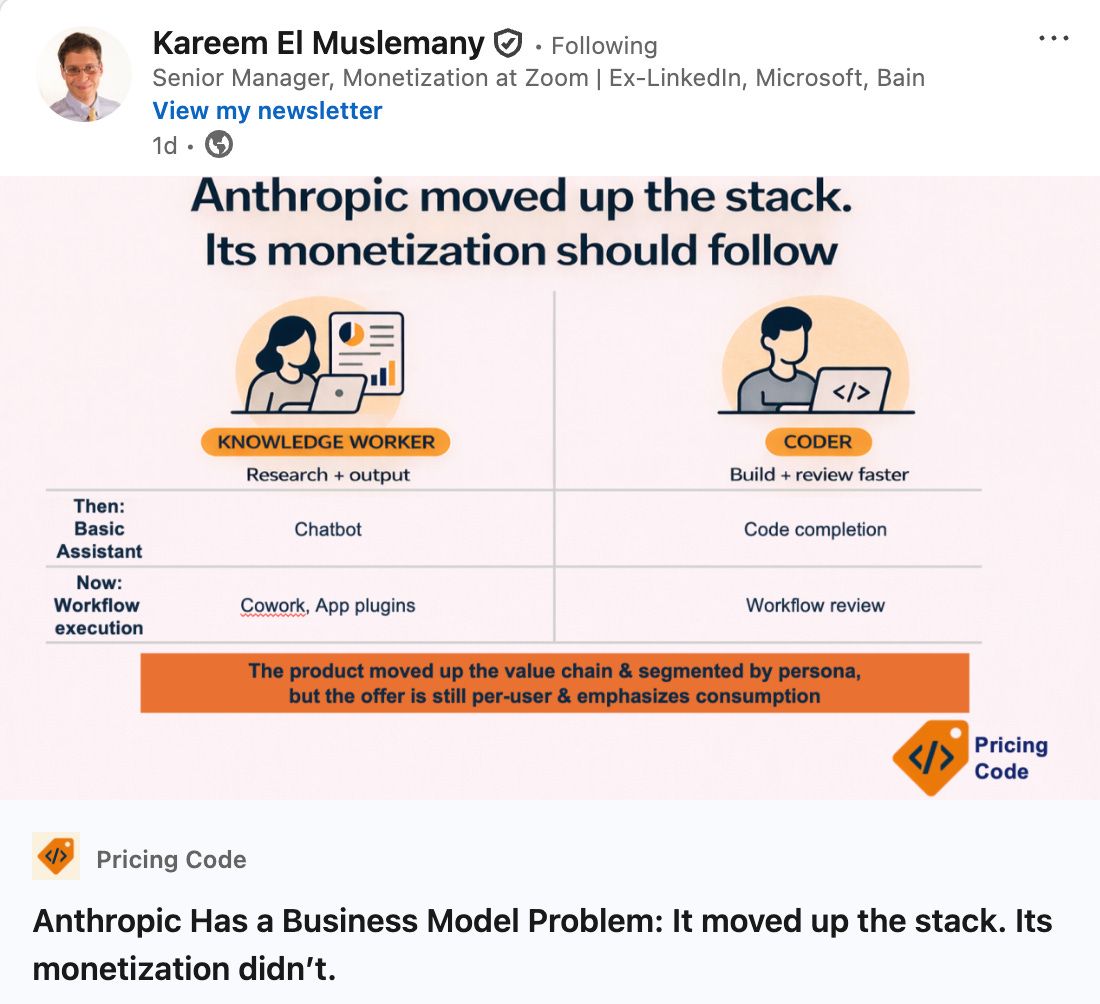

Kareem El Muslemany (senior monetization manager at Zoom and author of Pricing Code) highlighted that AI is novel and its costs can escalate unpredictably: in a recent survey, 80% of companies were 25% or more over their AI budget. Customers can use vendors' cost and ROI calculators to budget for AI costs. Variable costs can be optimized by using lower-cost services or AI models where they suffice, relying on internal data and tools, and reducing the number of steps in workflows. As a final safeguard, spend caps can prevent unplanned overages.
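Two of Kareem's levers, routing work to a cheaper model when it suffices and enforcing a spend cap, can be combined in a small guard. This is a sketch under stated assumptions: the model names, prices, and routing rule are hypothetical placeholders, and a production system would track spend in shared storage rather than in memory.

```python
from dataclasses import dataclass, field

# Hypothetical model tiers and prices per 1,000 tokens.
MODEL_PRICES_PER_1K_TOKENS = {"small-model": 0.001, "large-model": 0.015}


class SpendCapExceeded(RuntimeError):
    pass


@dataclass
class BudgetGuard:
    monthly_cap: float
    spent: float = 0.0
    log: list[tuple[str, float]] = field(default_factory=list)

    def charge(self, model: str, tokens: int) -> float:
        cost = tokens / 1_000 * MODEL_PRICES_PER_1K_TOKENS[model]
        # Refuse the call up front instead of discovering the overage on the invoice.
        if self.spent + cost > self.monthly_cap:
            raise SpendCapExceeded(f"{model} call of ${cost:.4f} would exceed the cap")
        self.spent += cost
        self.log.append((model, cost))
        return cost


def pick_model(task: str) -> str:
    # Hypothetical routing rule: narrow tasks go to the cheaper model,
    # open-ended generation goes to the larger one.
    return "small-model" if task in {"classify", "extract"} else "large-model"


guard = BudgetGuard(monthly_cap=1.00)
for task, tokens in [("classify", 2_000), ("extract", 3_000), ("write_email", 20_000)]:
    guard.charge(pick_model(task), tokens)
print(f"spent ${guard.spent:.3f} of ${guard.monthly_cap:.2f}")
```

A further `large-model` call of 100,000 tokens would cost $1.50 under these prices, so the guard raises `SpendCapExceeded` instead of spending it.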

But as Kareem also points out, pricing leaders should be careful about pricing on raw token costs alone. In his post, he notes that for developers using Claude's API alone, raw token costs don't always capture the true value delivered. Code Review, one of Claude's highest-value developer workflows so far, is billed on token usage at $15–25 per review. That logic made sense when Claude was mainly infrastructure, but Code Review is valuable IP. Monetizing it on credits alone undersells its incremental value.

Fynn Glover, co-founder and CEO of Schematic, said that how you price against rising AI costs is a strategy question. “It depends entirely on two things: your capitalization and your pricing philosophy based on your stage and your capitalization.”

If you’re well-funded and in a land grab, your philosophy might rightly be, “for now, let’s not let input costs drive our pricing.”

If you’re bootstrapped or undercapitalized and focused on demonstrating pricing power, then yes, cost discipline matters a lot.

“The mistake I see most often: companies defaulting to cost-plus pricing because their AI costs are rising. I think classic pricing theory is quite instructive here. The moment you anchor your price to your costs instead of your customer’s value, you’ve told the market exactly how to think about you and it’s not favorably. Cost-plus can work for pass-through add-ons. For your core product? It erodes perceived value and gives up pricing power,” said Fynn.
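The difference between the two anchors shows up in a toy calculation. All inputs are hypothetical: a monthly serving cost for an AI feature, an estimate of the value it creates for the customer, a markup, and a value capture rate.

```python
# Hypothetical inputs for one AI feature, per customer per month.
SERVING_COST = 40.0       # what the feature costs you to run
CUSTOMER_VALUE = 2_000.0  # estimated value to the customer (e.g. hours saved x hourly rate)


def cost_plus_price(cost: float, markup: float = 0.5) -> float:
    """Price anchored to your own costs."""
    return cost * (1 + markup)


def value_based_price(value: float, capture_rate: float = 0.15) -> float:
    """Price anchored to customer value; capture_rate is the share you charge for."""
    return value * capture_rate


for label, cost in [("today", SERVING_COST), ("costs double", SERVING_COST * 2)]:
    print(f"{label}: cost-plus ${cost_plus_price(cost):.2f}, "
          f"value-based ${value_based_price(CUSTOMER_VALUE):.2f}")
```

In this toy case the cost-plus price moves with your inputs ($60, then $120 when costs double), while the value-based price stays at $300 because the customer's outcome did not change.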

The New Skill GTM Teams Will Need

This shift creates a new challenge for GTM teams. The efficiency of AI workflows suddenly matters.

In the past, teams rarely needed to think about the internal mechanics of the tools they used. You bought the software, added users, and focused on adoption. With AI systems, the structure of the workflow itself becomes important.

A poorly designed workflow may trigger unnecessary enrichment calls. Prompts may be longer than needed. Automation loops may multiply the number of model calls.

None of this is visible in the interface. But it shows up very clearly in usage-based pricing.

This is why some teams are starting to treat AI workflows almost like engineering systems. They monitor how often processes run. They optimize prompts to reduce token usage. They simplify automation chains to avoid unnecessary calls.
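One common fix for unnecessary enrichment calls is to normalize and cache lookups before they reach a paid provider. This is a minimal sketch that assumes a hypothetical `enrich_from_provider` function standing in for any per-record enrichment API; in practice the in-memory dictionary would be a persistent store.

```python
call_count = 0                # paid provider calls actually made
_cache: dict[str, dict] = {}  # normalized domain -> enrichment result


def enrich_from_provider(domain: str) -> dict:
    """Hypothetical stand-in for a paid, per-record enrichment API."""
    global call_count
    call_count += 1
    return {"domain": domain, "source": "provider"}  # placeholder payload


def enrich(domain: str) -> dict:
    # Normalize so "Acme.com " and "acme.com" share one cache entry.
    key = domain.strip().lower()
    if key not in _cache:
        _cache[key] = enrich_from_provider(key)
    # Return a copy so downstream steps can't mutate the cached record.
    return dict(_cache[key])


rows = ["acme.com", "Acme.com ", "globex.io", "acme.com", "globex.io"]
results = [enrich(d) for d in rows]
print(f"{len(rows)} rows enriched with {call_count} paid calls")
```

Five rows cost two provider calls instead of five.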

The goal is not only to build workflows that work. The goal is to build workflows that work efficiently.

That is a new skill set for most GTM teams.

It sits somewhere between operations, data, and product thinking. Teams that learn to design efficient workflows will get more output from the same tools. And over time, that advantage compounds.

AI software is changing the economics of the SaaS model. The future GTM stack will not only be evaluated based on features.

It will also be evaluated based on how efficiently it converts compute into outcomes.

The teams that understand those economics early will build systems that scale without surprises.

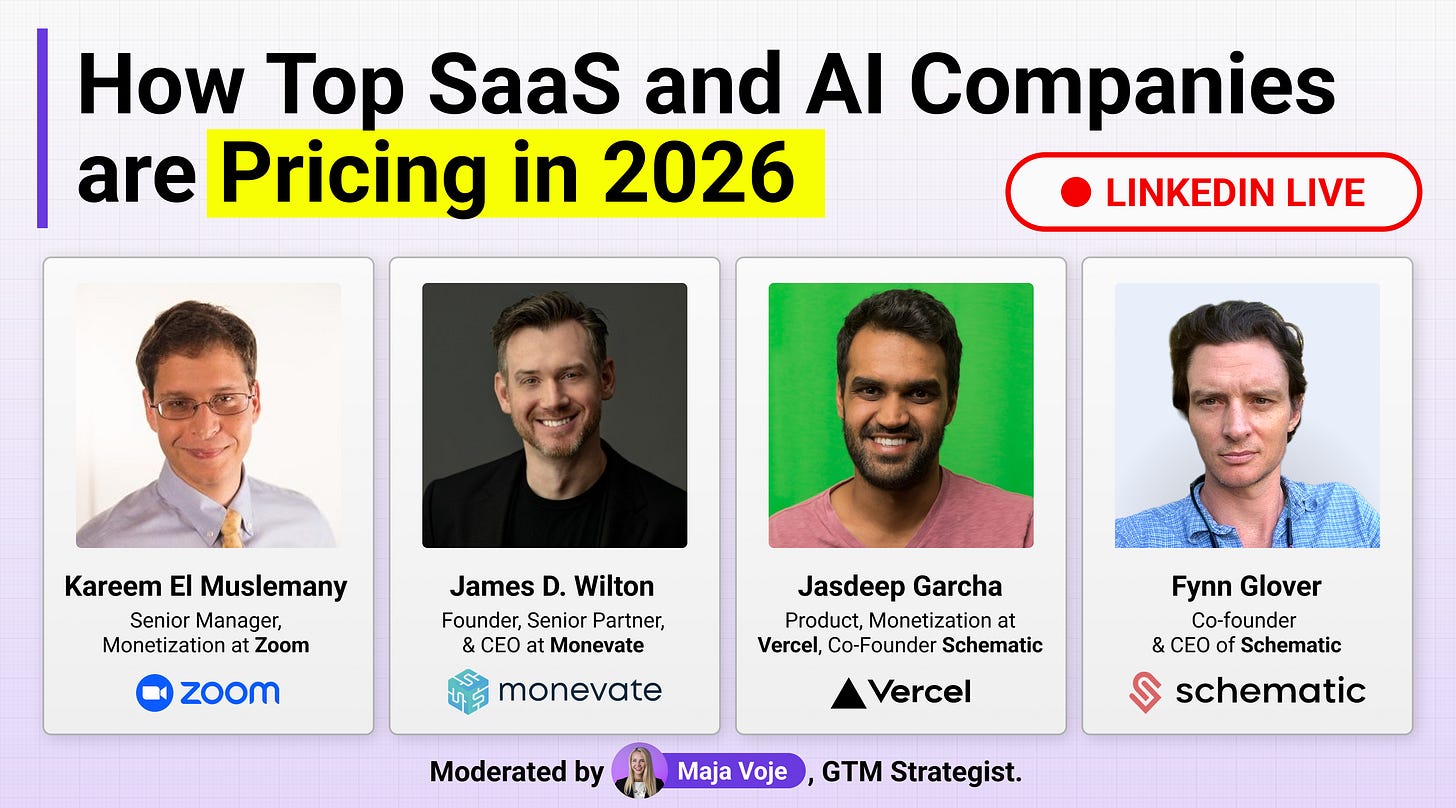

To hear directly from top pricing experts how they’re tackling AI monetization in 2026, join me on March 26 for a livestream with Kareem El Muslemany (Monetization at Zoom), James D. Wilton (Monevate, ex-McKinsey, author of Capturing Value), Jasdeep Garcha (Product & Monetization at Vercel), and Fynn Glover (CEO, Schematic).

We’ll dig into credits vs. usage, predictability vs. alignment, outcome-based vs. output-based pricing, and the growing gap between the value your AI product creates and the revenue you actually capture. No slides, no pitches - just what’s working and what’s not.

✅ Need ready-to-use GTM assets and AI prompts? Get the 100-Step GTM Checklist with proven website templates, sales decks, landing pages, outbound sequences, LinkedIn post frameworks, email sequences, and 20+ workshops you can immediately run with your team.

📘 New to GTM? Learn fundamentals. Get my best-selling GTM Strategist book that helped 9,500+ companies to go to market with confidence - frameworks and online course included.

📈 My latest course: AI-Powered LinkedIn Growth System teaches the exact system I use to generate 7M+ impressions a year and 70% of my B2B pipeline.

🏅 Are you in charge of GTM and responsible for leading others? Grab the GTM Masterclass (6 hours of training, end-to-end GTM explained on examples, guided workshops) to get your team up and running in no time.

🤝 Want to work together? ⏩ Check out the options and let me know how we can join forces.
